Why Does AI Hallucinate?

Causes of hallucinations and how to respond

Overview

AI hallucinates because it generates likely text, not verified facts.

Key Points

- Probability-based generation, not factual recall

- Training gaps can lead to fabricated content

- No built-in fact-checking

Use Cases

- Detect AI errors

- Verify AI answers

- Use AI safely

Common Pitfalls

- Trusting AI blindly

- Skipping verification for important info

- Relying on AI in expert domains

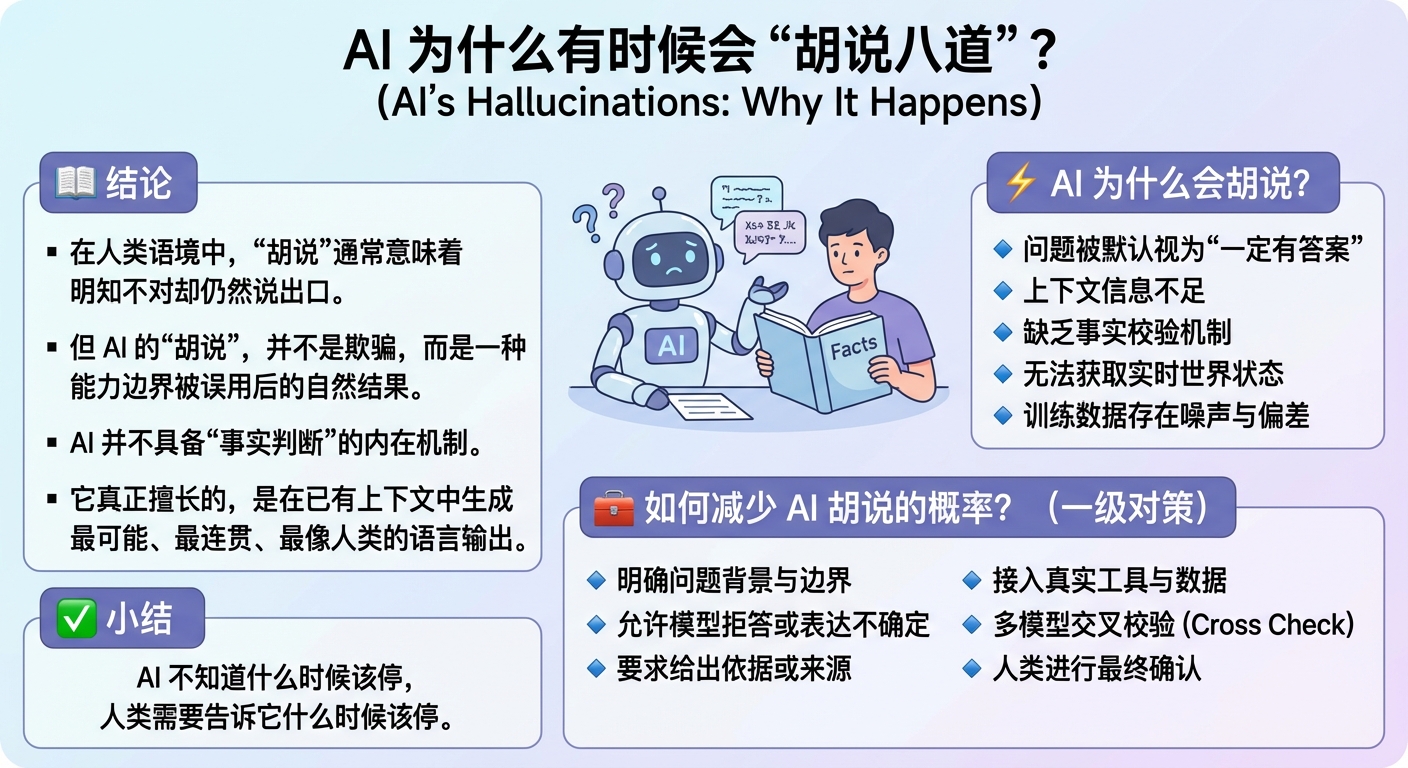

📚 Definition & Conclusion

In everyday speech, “making things up” usually means saying something you know is false. AI “hallucination” is not deception; it is the natural result of a system being used beyond its boundaries. AI has no built-in mechanism for judging whether something is true. What it is actually good at is generating the most likely, most coherent, most human-sounding output given the context.
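The idea of "most likely output" can be made concrete with a toy sketch. The prompt, the candidate tokens, and the probabilities below are all invented for illustration; a real model works over a far larger vocabulary, but the point is the same: nothing in the selection step checks whether the chosen text is true.

```python
import random

# Hypothetical next-token probabilities for the prompt
# "The author of the book is". All numbers are made up for illustration.
NEXT_TOKEN_PROBS = {
    "Jane": 0.45,
    "John": 0.35,
    "unknown": 0.20,
}


def pick_most_likely(probs):
    """Return the highest-probability token. No step here checks facts."""
    return max(probs, key=probs.get)


def sample_token(probs, rng):
    """Sample one token in proportion to its probability."""
    tokens = list(probs)
    weights = [probs[token] for token in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]


if __name__ == "__main__":
    print(pick_most_likely(NEXT_TOKEN_PROBS))
    print(sample_token(NEXT_TOKEN_PROBS, random.Random(0)))
```

In this sketch "unknown" is just another candidate competing on likelihood, so a fluent but wrong name can easily win.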

⚡ Why Does AI Hallucinate?

🔸 Reason 1: The question itself has no definite answer

When a question has no single correct answer, or the answer is not publicly known, AI will still produce one that looks reasonable. Example: “What is the internal strategy of a small private company?”

🔸 Reason 2: Context is incomplete, so AI guesses

When time, subject, or scope is unclear, AI fills in the most common version. Example: “When does this policy start?” (with no country or policy specified).

🔸 Reason 3: AI does not verify facts by default

Unless it is asked to or has tools to do so, AI will not check whether an answer is true. Example: inventing a book title and author that sound real.

🔸 Reason 4: No access to real‑time data

Without a connection to live data, AI can only infer the present from what it learned in training, which may be out of date. Example: answering a question about today’s stock price or breaking news with stale information.

🔸 Reason 5: Training data can be wrong

AI learns from human text, and human text can be inaccurate or outdated. Example: repeating a widely misreported piece of “common knowledge.”

❓ Can Hallucination Be Eliminated?

Not completely, but it can be significantly reduced. The key is less about “making AI smarter” and more about providing clearer boundaries, context, and constraints.

🛠️ How to Reduce Hallucinations

🔹 State the context clearly

The more precise the time, subject, and scope, the less AI will fill in wrong details.
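One way to make this a habit is to refuse to send a question until the context is filled in. The sketch below is a minimal illustration; `build_prompt` and its field names are hypothetical, not part of any particular tool.

```python
def build_prompt(question, time_frame, subject, scope):
    """Combine a question with explicit context; reject empty context fields."""
    context = {"Time frame": time_frame, "Subject": subject, "Scope": scope}
    missing = [name for name, value in context.items() if not value.strip()]
    if missing:
        # Fail loudly instead of letting the model fill in the gap.
        raise ValueError("Missing context: " + ", ".join(missing))
    lines = [f"{name}: {value.strip()}" for name, value in context.items()]
    lines.append(f"Question: {question.strip()}")
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_prompt(
        "When does this policy start?",
        time_frame="2024",
        subject="Example data-privacy policy",
        scope="Country X",
    ))
```

Leaving any of the three fields blank raises an error, which forces the asker to supply the detail the model would otherwise guess.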

🔹 Allow AI to say “I don’t know”

Tell AI explicitly that uncertainty is acceptable, instead of forcing an answer.
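In practice this can be a fixed instruction appended to every question, plus a check for the abstention phrase in the reply. The sketch below assumes a plain-text exchange; the helper names and the exact wording of the instruction are illustrative choices, and a model is not guaranteed to follow them.

```python
ABSTAIN = "I don't know"


def with_abstention(question):
    """Append an instruction that makes uncertainty an acceptable answer."""
    return (
        f"{question}\n"
        f"If you are not sure, reply exactly: {ABSTAIN}. Do not guess."
    )


def interpret(reply):
    """Return None when the model abstained, otherwise the cleaned reply."""
    cleaned = reply.strip()
    if cleaned.rstrip(".").lower() == ABSTAIN.lower():
        return None
    return cleaned


if __name__ == "__main__":
    print(with_abstention("Who wrote this book?"))
    print(interpret("I don't know."))
```

Treating an abstention as a distinct result (`None` here) lets the calling code route the question to a person or a data source instead of displaying a guess.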

🔹 Ask for evidence or sources

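This discourages unsupported claims and makes the ones that remain easier to check. The cited sources still need to be verified, because AI can invent references that sound real.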
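A simple follow-up is to flag any sentence in the answer that carries no source marker. The sketch below assumes the model was asked to cite with bracketed numbers such as `[1]`; that convention, the function name, and the naive sentence splitting are all assumptions made for illustration.

```python
import re

# Assumed citation convention: a bracketed number such as [1] or [12].
CITATION = re.compile(r"\[\d+\]")


def unsupported_sentences(answer):
    """Return the sentences that carry no [n] citation marker."""
    # Naive split on whitespace that follows ., ! or ? (abbreviations will confuse it).
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", answer.strip()) if s]
    return [s for s in sentences if not CITATION.search(s)]


if __name__ == "__main__":
    text = "The report was published in 2020 [1]. It sold a million copies."
    print(unsupported_sentences(text))
```

A marker only shows that a source was named, not that the source exists or says what is claimed, so flagged and unflagged sentences alike still need a human check.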

🔹 Connect to real tools and data

When AI can query databases, live systems, or authoritative sources, guesswork drops.
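The pattern is to answer from the data source when it has the value and to say so plainly when it does not. In the sketch below, a dictionary stands in for a live system; `LIVE_PRICES`, the ticker, and the price are hypothetical placeholders.

```python
# Hypothetical stand-in for a live data source (database, API, price feed).
LIVE_PRICES = {"ACME": 123.45}


def answer_price(ticker, source=LIVE_PRICES):
    """Answer from the data source, or report that no data is available."""
    symbol = ticker.upper()
    price = source.get(symbol)
    if price is None:
        # Refuse to guess when the source has nothing.
        return f"No live data available for {symbol}."
    return f"{symbol} is trading at {price:.2f} (from the data source)."


if __name__ == "__main__":
    print(answer_price("acme"))
    print(answer_price("zzz"))
```

The important design choice is the fallback branch: when the lookup fails, the answer is an explicit "no data" message, not an estimate.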

🔹 Humans confirm critical conclusions

AI is a helper, not the final judge.
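In a workflow, this can take the form of a gate that holds back answers on sensitive topics until a person signs off. The sketch below is one possible shape; the topic list and the `release` function are illustrative assumptions, to be replaced with whatever counts as critical in your setting.

```python
# Illustrative list of topics that require sign-off; adjust to your own setting.
CRITICAL_TOPICS = {"medical", "legal", "financial"}


def release(answer, topic, approved_by=None):
    """Return the answer only if it is non-critical or a human has approved it."""
    if topic.lower() in CRITICAL_TOPICS and not approved_by:
        raise PermissionError(f"'{topic}' answers need human confirmation first.")
    return answer


if __name__ == "__main__":
    print(release("Try a 20-minute walk.", topic="fitness"))
    print(release("Clause 4 applies.", topic="legal", approved_by="reviewer"))
```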

🔹 Cross‑check with multiple models

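Ask different models the same question and compare results to catch errors. Agreement is a useful signal, not proof: models trained on similar text can repeat the same mistake.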
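The comparison step can be as simple as counting matching answers. The sketch below assumes the answers have already been collected as short strings keyed by model name; the model names, the `min_agreement` threshold, and the lowercase normalization are illustrative choices that suit short factual answers, not long free-form text.

```python
from collections import Counter


def cross_check(answers, min_agreement=2):
    """Return the most common normalized answer if enough models agree, else None."""
    normalized = [answer.strip().lower() for answer in answers.values()]
    if not normalized:
        return None
    top, count = Counter(normalized).most_common(1)[0]
    return top if count >= min_agreement else None


if __name__ == "__main__":
    replies = {"model_a": "Paris", "model_b": "paris ", "model_c": "Lyon"}
    print(cross_check(replies))
```

A result of `None` means the models disagreed, which is the cue to verify the answer some other way.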

✅ Summary

AI hallucinates not because it is “not smart enough,” but because it lacks human-level responsibility for truth and real-world constraints. More precisely, AI does not know when to stop; humans must tell it when to check, when to stop, and when to confirm.